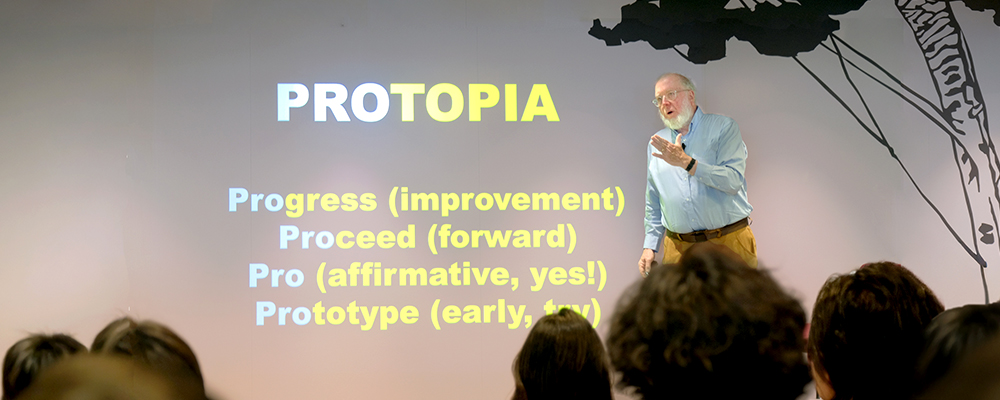

Kevin Kelly, Editor-in-Chief of WIRED magazine

With generative AI (artificial intelligence) evolving rapidly, we are confronted by fundamental questions, such as what humanity’s role will be and whether we will be displaced by AI. In December 2025, Nomura Research Institute (NRI) invited Kevin Kelly, the Editor-in-Chief of WIRED magazine, to give a special lecture entitled “The Nature of AI: What We Have Seen So Far”. Given the uncertainty over what will become of us as the creators of AI, what must we do to prepare for the future? We present the content of that talk here in Part 1.

The Four Uncertainties Surrounding AI

Kelly identified four uncertainties that surround the future of AI.

The first is whether we will see the emergence of artificial general intelligence (AGI) or the use of multiple specialized AIs. Kelly stated that “we have to talk about AIs, plural”, and he sees value in collaborating with various kinds of AI that, like aliens, think differently from humans.

The second is whether AI will run mainly in large data centers that dominate the field, or on decentralized local devices.

The third concerns employment: whether AI will replace human beings or will supplement human activities as a partner. Kelly said that “very few people have lost their job to AI”, offering his view that AI will augment and complement human abilities in a partnership role.

The fourth concerns openness: whether AI will go the open source route or remain closed. While Kelly thinks it would be ideal for AI to become a “public good for all mankind”, as the Internet has, his dispassionate analysis has led him to believe that the closed model is likely to become dominant over the next ten years.

These elements in combination will shape the future to a significant degree, and thus Kelly stressed the importance of “rehearsing” these various scenarios to be prepared for any outcome.

Four Frontiers for AI Over the Next Five Years

Kelly also outlined four frontiers for AI over the next five years. The first one is symbolic reasoning. It is clear that we cannot reach the next step with our current LLMs (large language models) alone. We must treat intelligence as, so to speak, a “compound” made up of various elements, and combine LLMs with classical AI models that excel at logical deduction, thereby opening up new possibilities and broadening the scope of AI’s potential.

The second one is spatial AI. Current AIs know the texts written about the world, but they do not know the world itself. There is a divide between that kind of semantic AI and the kind of AI that is trained to operate in the physical world, such as the AI in autonomous vehicles. Bridging this divide would enable AIs to perceive how the real world works and to create a new world. A good example is augmented reality made possible by smart glasses.

The third one is emotional AI. “I think robots that seem to have emotions will have a bigger impact on us than ‘intelligent’ AI will”, Kelly said. Humans will be able to form real relationships with these AI counterparts, even if they are synthetic or virtual in nature. If we can create AIs that understand emotions, they may very well become good partners not just for troubled teens, but for mature adults too.

The fourth and final one is AI agents that behave autonomously. For example, imagine smart glasses that detect everything about us, listening to our conversations and picking up the trembling of our skin and other body language, with thousands of agents working together to anticipate what we want and then do it. Most of these agents would probably be invisible to us and operate out of sight, much like back-office operations or plumbing.

Kelly foresees the emergence of new “AI economic spheres”, stating that “in an AI economy where agents cooperate with each other, we may see economic spheres even larger than the ones we humans know, with the bots paying each other in value, for instance”. On the other hand, Kelly went on to say, this would also give rise to new concerns, such as who owns the agents, where responsibility lies, and whether the agents can be trusted.

Choose Optimism!

With so many things unfolding at a rapid pace, Kelly argued that a stance favoring a “slow takeoff” would be effective. This requires taking the time to fully absorb what already exists and adjusting workflows and organizational setups toward their ideal form. Companies can spend a great deal of money deploying AI and still fail at first. As Kelly explained, adopting AI requires a commitment to the “rule of three” (i.e., finally succeeding on the third try) and to making continuous attempts without fear of failure.

Kelly believes that AI will enhance human work rather than replace it. There are three reasons to employ human beings: performing tasks, being accountable, and learning continuously. Of these three, the only one that today’s AI can accomplish is performing tasks. “You won’t lose your job to AI. But you might lose it to someone who’s able to use AI well”, he said. The key will be how to build outstanding partnerships with AI.

“This means imagining a bright future, and having faith that it’s possible to achieve it. Optimism isn’t a matter of personal character — it’s a choice. The present was built by the optimists of the past. I hope you all will also choose optimism and create that future”, Kelly said, concluding his talk.