Daisuke Takagi and Misuzu Takahashi, IT Management Consulting Department

The use of AI is rapidly spreading in the realm of corporate activities, yet governance often cannot keep pace with the changes. The risks lurking behind its conveniences are of a different nature from those of conventional IT, and once they materialize, they can have fatal consequences for business management. And given the need to comply with stricter regulations on a global scale, such as the EU AI Act, how should Japanese enterprises face the issue of AI governance?

NRI members Daisuke Takagi and Misuzu Takahashi, who specialize in helping clients build AI governance structures, suggest that management must take the lead in creating organizations such as an “AI Center of Excellence (CoE)”, and must shift to an “aggressive governance” that strikes the right balance between AI promotion and control.

AI Poses New Management Responsibilities, and Not Knowing Them Comes with Costs

――In an era that demands accountability, what kinds of risks are companies facing by promoting the use of AI without a governance framework that can keep up?

Takagi : With AI now being incorporated into various businesses and operations, management-related risks are growing beyond what we’ve seen thus far. These come in various forms, including the dissemination of misinformation caused by hallucinations, unintended rights violations (copyright infringement, etc.), and cyberattacks targeting AI systems (e.g., prompt injection attacks, model theft attacks, data poisoning). Depending on the industry, these risks could even affect human lives, so the impact on management is becoming greater than it ever was with traditional IT.

――Where do AI’s unique risks lie?

Takagi : AI can be highly “opaque”, which makes it difficult to say how or why it arrived at a certain determination. And once any faulty decision logic or any inappropriate data enters the system, it can affect every domain of a company’s operations, including decision-making, customer service, and so on. Instead of a one-off system failure, this could throw the very quality of a company’s decisions into question.

Takahashi : When we treat AI in the same sense as conventional IT, we tend to conclude that the risks aren’t quite that serious. However, the more widespread AI use becomes, the more unacceptable it is for management to fail to grasp the “what”, the “how”, and the “where” of AI use. In an era when accountability is in such demand, the very act of using AI without having a governance framework in place is itself a serious management risk.

Linking Management and the Workplace, and Strategically Narrowing Down the Areas to be Protected

――Many companies also have a hard time deciding, at the management level, just how far they should tolerate the risks posed by evolving technologies. What sorts of situations are arising in the workplace?

Takahashi : Two extreme situations are happening at the same time. One is that with no definitive rules on AI use or dedicated staff to consult, workers will make decisions on AI use on the spot with only a vague awareness of the risks, and this leads to a “shadow AI” phenomenon. The other is that senior management is so fearful of AI’s risks that it ends up making the rules too rigid, and so the AI becomes practically unusable. These two extremes are happening concurrently at many companies.

――Some companies also seem to misinterpret AI governance to mean rules for preventing AI use. What does management actually need to decide?

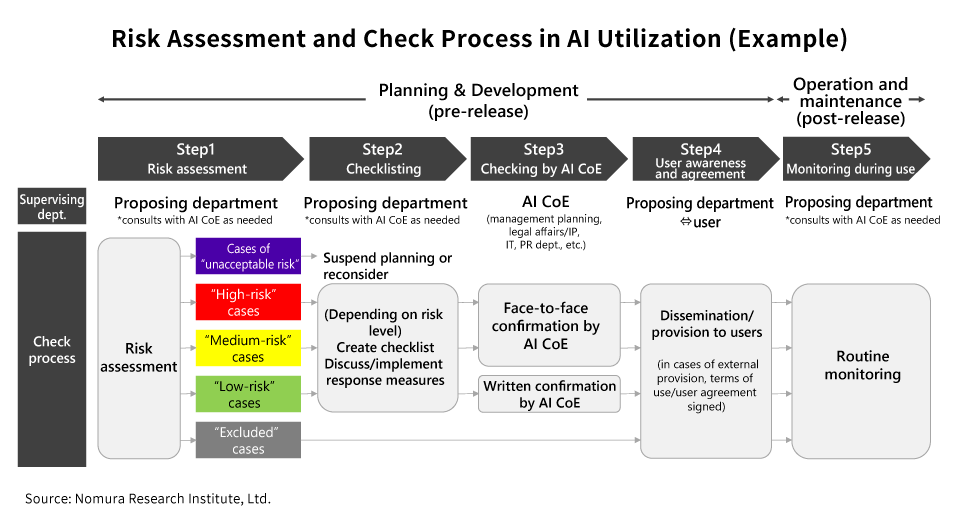

Takagi : The important thing is for management to decide on baseline premises not simply for restricting the use of AI, but for using it safely and securely. To that end, the first thing is to classify the risks according to work operations and applications. For instance, this could mean classifying anything that directly controls factory lines or social infrastructure as high-risk, anything related to sharing information with the public as medium-risk, and anything involving the efficiency of internal office operations like preparing meeting minutes as low-risk. Then, they have to set degrees of risk tolerance to specify how far the risks at each of those levels can be tolerated (e.g., acceptable limits, essential checks and approvals, oversight and monitoring standards). This isn’t an individual determination to be made on the spot; it’s a kind of decision-making for which management is responsible. Senior management has to be committed to drawing these lines, or else governance will ultimately be a mere formality.
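As a purely illustrative sketch of the two steps Takagi describes (classify each use by risk, then attach a tolerance to each level), the result could be recorded as a pair of lookup tables. The category names, the tolerance fields, and the rule that unclassified uses default to high-risk are all assumptions made for this example, not anything specified in the interview.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"


@dataclass(frozen=True)
class Tolerance:
    """Controls attached to a tier (illustrative values only)."""
    approval_required: bool  # must someone sign off before use?
    monitoring: str          # how the use is overseen afterwards


# Hypothetical categories that mirror the examples given in the interview.
TIER_BY_CATEGORY = {
    "factory_line_control": RiskTier.HIGH,
    "social_infrastructure_control": RiskTier.HIGH,
    "public_information_sharing": RiskTier.MEDIUM,
    "meeting_minutes": RiskTier.LOW,
}

# Hypothetical tolerance settings; real ones are a management decision.
TOLERANCE_BY_TIER = {
    RiskTier.HIGH: Tolerance(approval_required=True, monitoring="continuous"),
    RiskTier.MEDIUM: Tolerance(approval_required=True, monitoring="periodic"),
    RiskTier.LOW: Tolerance(approval_required=False, monitoring="spot check"),
}


def classify(category: str) -> RiskTier:
    # Assumption: an unknown category is treated as high-risk so that
    # unclassified uses are escalated instead of decided on the spot.
    return TIER_BY_CATEGORY.get(category, RiskTier.HIGH)


def tolerance_for(category: str) -> Tolerance:
    return TOLERANCE_BY_TIER[classify(category)]
```

Keeping the tiers and the tolerances in separate tables reflects the point that drawing these lines is a management decision: the tolerance values can be revised without touching how individual uses are classified.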

Takahashi : If those at the top clearly spell out the policy, those on the ground will be able to do their jobs more confidently. Conversely, if messaging from the management remains ambiguous, workplace operations will tend to atrophy, or AI use will be left to the workers’ own judgment. Leadership by senior management is essential in order to strike the right balance between promoting AI and managing the risks.

――What matters the most when it comes to designing mechanisms both for accelerating AI use and for curbing the risks?

Takahashi : The areas to be classified as high-risk will vary depending on the nature of a company’s business. Each company has to fully discuss the standards by which to judge factors affecting the risks, including its established risk management rules and the features of its business, and then draw clear lines between where speed should be prioritized and where countermeasures become necessary. That balance has to be struck on a continual basis.

Takagi : It’s important for companies to think not only about defensive risks, but also about the loss of opportunity that comes with not using AI. That means considering how the decision not to use AI will affect their cost structure, their decision-making speed, their customer experience, and their competitive capabilities in the future. The key is to determine where they can rapidly try out new things and where they should enhance controls, as measured against their management strategy.

Making Management Decisions Work on the Ground

――What kinds of frameworks or measures are needed to ensure that management decisions become entrenched in the governance at work on the ground?

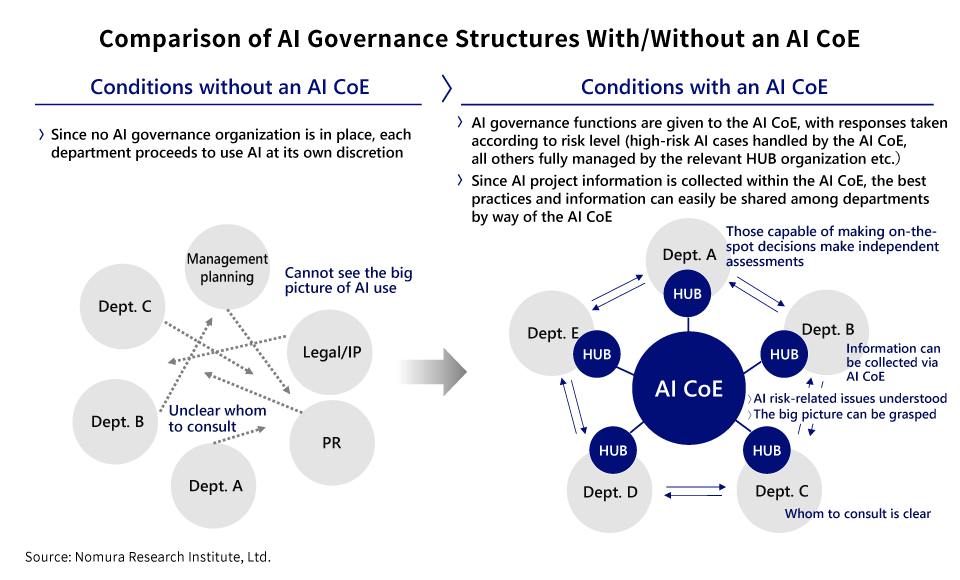

Takahashi : First, it’s important to establish an “AI Center of Excellence (CoE)” as a sort of cross-organizational command center. Depending on your company’s scale, this need not be a dedicated unit, but you do need an organization equipped with that function.

This isn’t something that an IT department can fully handle on its own. The CoE’s function is to bring key personnel from legal affairs, security, and the business units together, gathering both “defensive” and “offensive” knowledge at a single table. What those on the ground need isn’t rules in the sense of legal provisions, but principles translated into guidelines that show specifically how AI should be used in their own operations. An AI CoE’s role is one of conversion: taking the degrees of risk tolerance determined by senior management and embodying them in concrete rules and tools that those on the ground can use intuitively. Furthermore, this organization shouldn’t be a watchdog like the police, but a companion or an escort that solves problems on-site. Instead of simply rejecting a certain way of using AI, it offers alternatives for using it safely. This approach allows on-site knowledge to be collected naturally within the AI CoE; as a result, it prevents governance from becoming a mere formality and raises the level of AI literacy for the organization as a whole.

Takagi : That said, as projects involving the use of AI multiply, it won’t be realistic for an AI CoE to keep watching all of them. Companies will need to develop human resources capable of making autonomous decisions in each department, while also making their governance itself more efficient. By adopting a model in which high-risk cases involve consultation with the AI CoE, while medium-risk and lower-level cases get handled by a hub organization in the relevant department, you can reduce the burden on your AI CoE, and more easily build an effective governance system in every workplace.
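The split Takagi outlines can be pictured as a simple routing rule. The sketch below is only an illustration: the function names, the string labels, and the choice to escalate unclassified cases to the AI CoE are assumptions, not part of the model described in the interview.

```python
from collections import Counter

COE = "AI CoE"
DEPARTMENT_HUB = "department hub"


def route_review(risk_tier: str) -> str:
    """Return who reviews a use case under the two-level model."""
    tier = risk_tier.strip().lower()
    if tier in {"medium", "low"}:
        return DEPARTMENT_HUB
    # High-risk cases go to the AI CoE. Assumption: so does anything
    # that has not been classified yet, rather than guessing a tier.
    return COE


def review_load(risk_tiers: list[str]) -> Counter:
    """Count how many cases land with each reviewing body."""
    return Counter(route_review(tier) for tier in risk_tiers)
```

For example, `review_load(["high", "medium", "low", "low"])` assigns one case to the AI CoE and three to the department hub, which is the burden reduction the model is aiming for.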

――It sounds like AI governance isn’t something that’s over once it’s been designed.

Takagi : AI technology itself is evolving at an extremely high speed, and the risk assumptions are continually changing along with it. You can’t just sit back once your guidelines are created; you have to assume that they will need to be reviewed and updated as operations proceed. Going forward, we’ll also likely see more cases in which AI acts autonomously without waiting for instructions from a human, as with AI agents. If that happens, we’ll start to see situations that can’t be addressed with fixed rules that try to envision every possible scenario beforehand. That’s precisely why it’s so important to design, in advance, the process for deciding what to do when exceptions occur.

Takahashi : My sense is that rather than amassing more and more detailed rules, if you instead communicate the right decision-making approach and the consultation process, in many cases it will ultimately help your workplace function better. This requires having a governance structure that can operate flexibly, in keeping with environmental changes.

――In that context, what sort of stance does senior management have to take?

Takagi : It’s important for management not to demand perfection from the start, on the assumption that the technology will keep evolving. This means continuing to consider things as operations run along, and making updates as needed. If management indicates these intentions, then the whole organization will run more smoothly.

Takahashi : Not using AI is no longer an option. Putting an AI governance structure in place is not purely defensive; it also supports offensive strategies. If management can clearly demonstrate that awareness, I believe it will help create an organization where those on the ground can make their own decisions and take action accordingly.

Profile

Daisuke Takagi

IT Management Consulting Department

Daisuke Takagi joined Nomura Research Institute (NRI) in 2013 after receiving his master's degree from the Graduate School of Science and Technology at Keio University. That same year, he was seconded to NRI SecureTechnologies, where he worked in security consulting. Since returning to NRI in 2019, he has been providing consulting services in the fields of digital transformation and IT management, with a focus on digital and IT strategy, organizational change, human resource development, and the promotion of DX (Digital Transformation).

Misuzu Takahashi

IT Management Consulting Department

Misuzu Takahashi joined Nomura Research Institute (NRI) in 2009. She has an extensive background in system development, project management, and system operations and maintenance for the manufacturing and logistics industries. In recent years, she has been providing IT management consulting services across a wide range of industries. Her areas of expertise include business and system transformation planning, IT strategy, human resource development, and IT governance.

* Organization names and job titles may differ from the current version.